Backend

-

Deploying a Node.js Application with AWS App Runner

AWS App Runner is a fully managed service provided by Amazon Web Services (AWS). It makes it easy for developers to quickly deploy containerized web…

-

Send SMS in Node.js using Nexmo

Hi! As a JS enthusiast, I love JavaScript development because it is easy to pick up and there are lots of people around to help. Today, I have…

-

Annotations & Their Uses in Java

Hello, I’m a newbie to the Java world and so also new to Spring Boot. I don’t have any prior experience with Spring either. As a new…

-

Split & Map a String in Java

Hi, everyone. I’ve been learning the basic concepts of Java since last month. While learning, I worked through some examples on my own. In future…

-

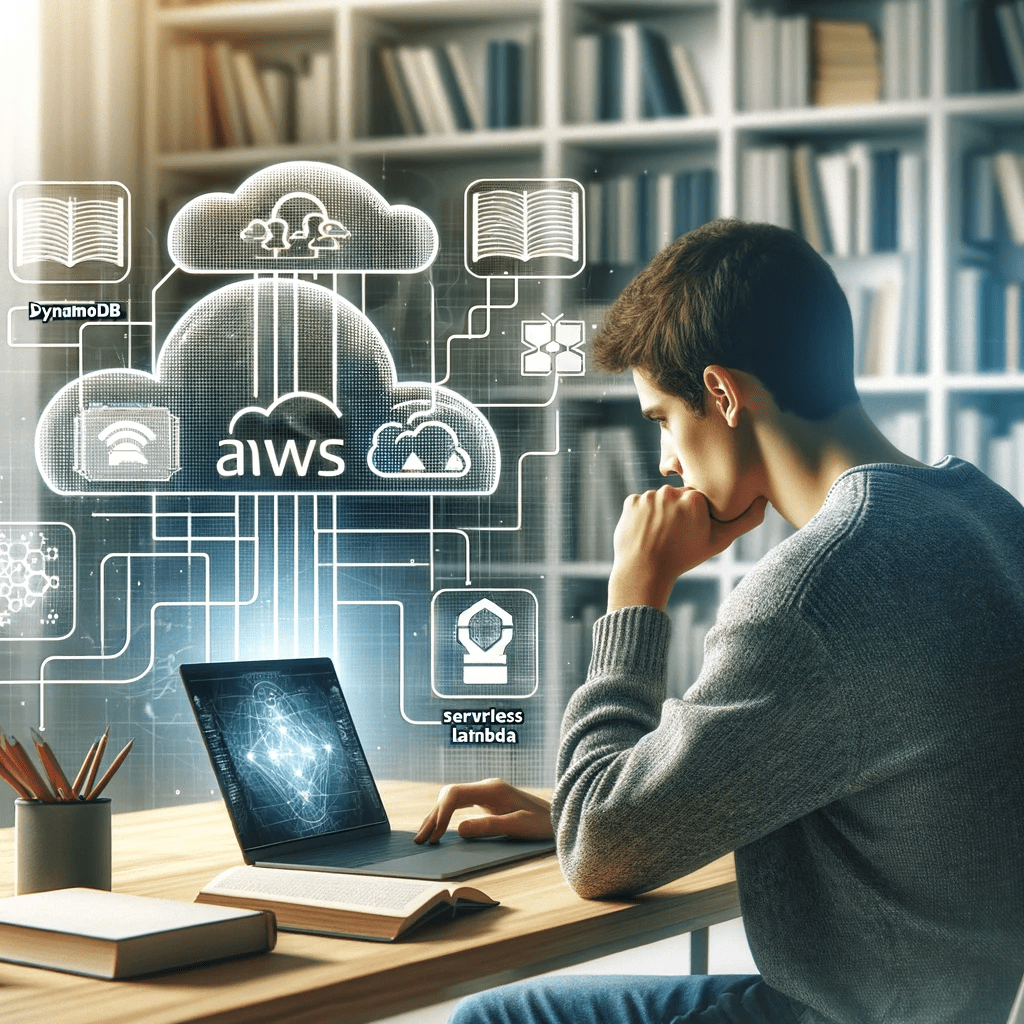

Simple CRUD App with DynamoDB – API Gateway – Node.js Serverless with Lambda

Imagine you have a big box of Legos. With these Legos, you can build lots of different things, like houses, cars, and even spaceships. But…