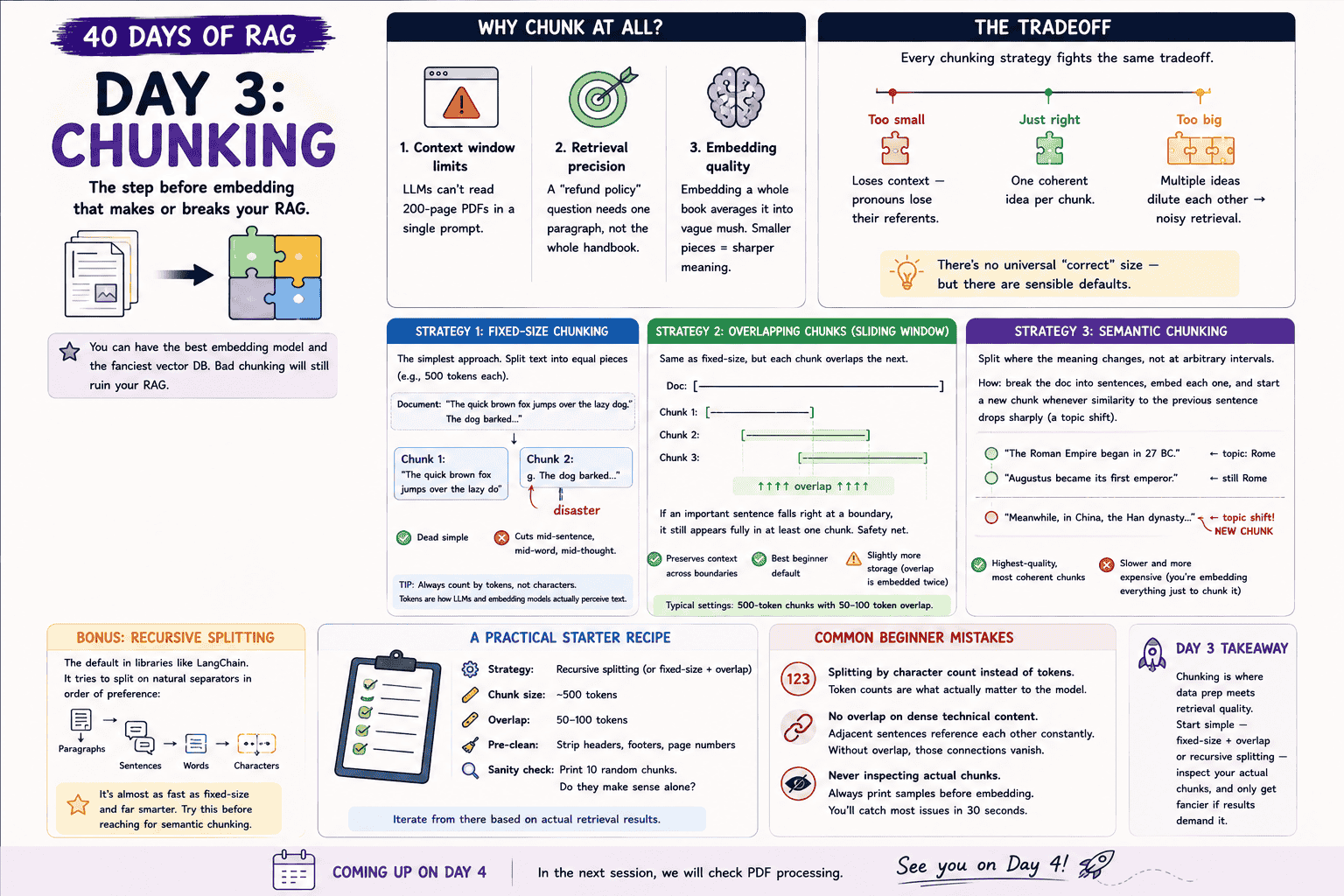

Day 3: Chunking — The Make-or-Break Decision in RAG

Effective RAG systems rely on optimal text chunking. Explore strategies like fixed-size, overlapping, and semantic methods for improved results.

Parathan Thiyagalingam

Parathan Thiyagalingam

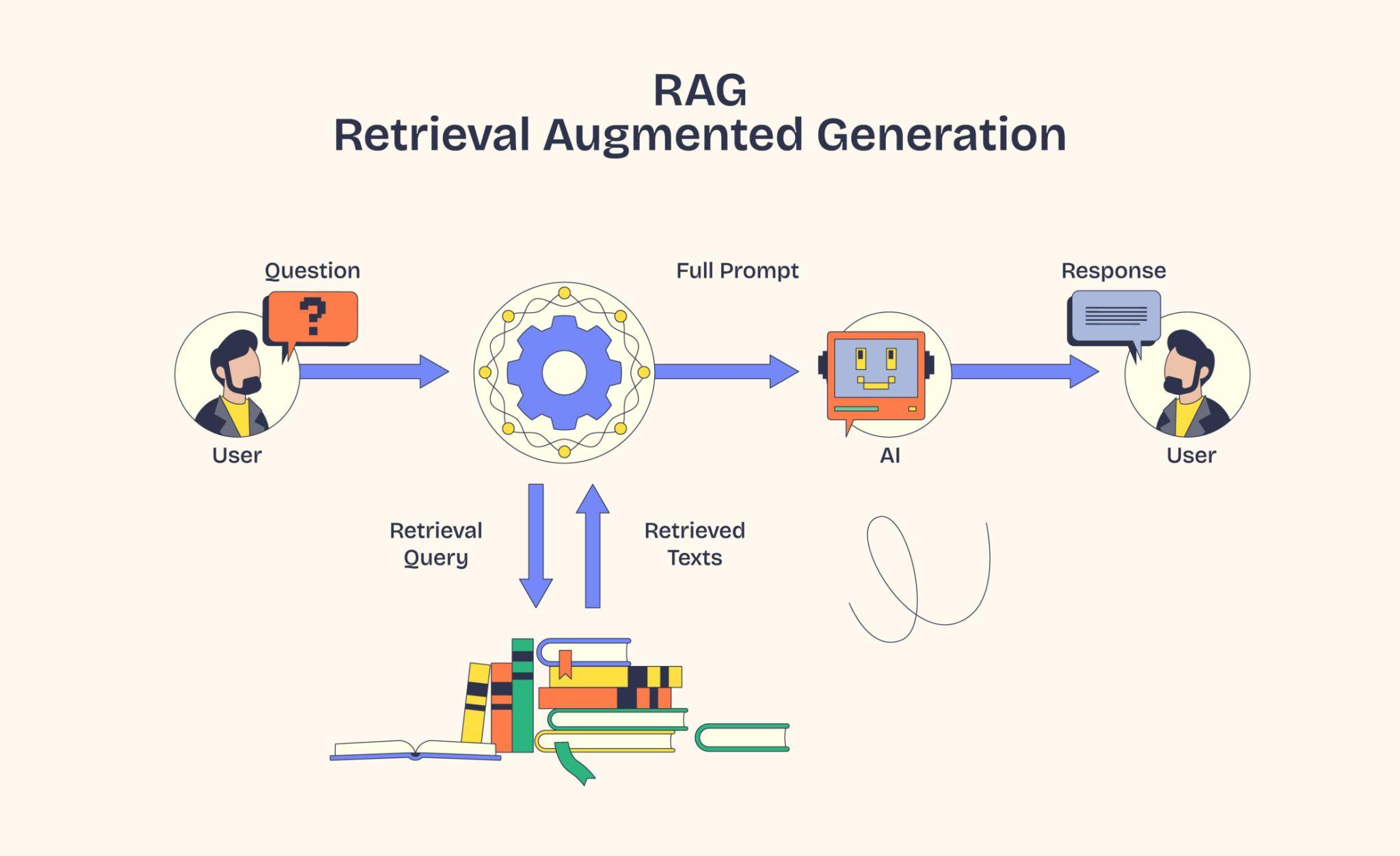

Day 2 turned text into embeddings and packed them into a vector database. But there is a step before embedding that quietly decides whether the whole system works. Chunking. Today, we unpack it. Bad chunking ruins even the best embedding model and the fanciest vector DB.

This blog post is a daily learning summary of my 40-day RAG class from Syed Jaffer of Parotta Salna.

Terms Used Today

- Chunking: Splitting a long document into smaller pieces before embedding.

- Chunk: One of those smaller pieces. Usually a paragraph or a few sentences.

- Fixed-size chunking: Splitting the text into equal pieces (e.g., 500 tokens each), regardless of meaning.

- Overlapping (sliding-window) chunking: Same as fixed-size, but each chunk shares a few tokens with the next.

- Semantic chunking: Splitting where the meaning changes, not at arbitrary intervals.

- Recursive splitting: A practical middle ground that tries paragraph, then sentence, then word boundaries in order.

- Token: The unit LLMs and embedding models actually count. Roughly four characters of English text per token.

1. Why Chunk At All?

Why not just embed the entire document as a single giant vector? Three reasons.

- Context window limits. LLMs cannot read a 200-page PDF in a single prompt.

- Retrieval precision. A "refund policy" question needs one paragraph, not the whole handbook.

- Embedding quality. Embedding a whole book averages it into vague mush. Smaller pieces give sharper meaning.

So we split. The question is how.

2. The Chunking Tradeoff:

Every chunking strategy fights the same tradeoff.

- Too small. Loses context. Pronouns lose their referents.

- Too big. Multiple ideas dilute each other; retrieval gets noisy.

- Just right. One coherent idea per chunk.

There is no universal "correct" size, but there are sensible defaults.

3. Fixed-Size Chunking:

The simplest approach. Just split the text into equal pieces (e.g., 500 tokens each).

Take this document: "The quick brown fox jumps over the lazy dog. The dog barked..."

A naïve fixed-size cut at, say, 40 characters might give:

- Chunk 1: "The quick brown fox jumps over the lazy do"

- Chunk 2: "g. The dog barked..."

That second chunk is a disaster. We have just cut a word in half.

Fixed size is dead simple, but it cuts mid-sentence, mid-word, mid-thought.

Tip: Always count by tokens, not characters. Tokens are how LLMs and embedding models actually perceive text.

4. Overlapping Chunks (Sliding Window):

Same as fixed-size, but each chunk overlaps the next. If an important sentence falls right at a boundary, it still appears fully in at least one chunk. A safety net.

A common setting: 500-token chunks with 50–100 token overlap.

The good. Preserves context across boundaries. The best beginner default.

The cost. Slightly more storage, since the overlap is embedded twice.

5. Semantic Chunking:

Split where the meaning changes, not at arbitrary intervals. We break the doc into sentences, embed each one, and start a new chunk whenever similarity to the previous sentence drops sharply (a topic shift).

For example:

- "The Roman Empire began in 27 BC." (topic: Rome)

- "Augustus became its first emperor" (still Rome)

- "Meanwhile, in China, the Han dynasty..." (topic shift, new chunk)

The good. Highest-quality, most coherent chunks.

The cost. Slower and more expensive, since we have to embed everything just to figure out where to split. Day 4 will dig into semantic chunking with working code.

6. Recursive Splitting (The Sweet Spot):

The default in libraries like LangChain. It tries to split on natural separators in order of preference: paragraphs → sentences → words → characters.

Almost as fast as fixed-size and far smarter. Try this before reaching for semantic chunking. It is a sweet spot for most projects.

7. A Practical Starter Recipe:

If we are building our first RAG system tomorrow:

- Strategy: Recursive splitting (or fixed-size + overlap).

- Chunk size: 500 tokens.

- Overlap: 50–100 tokens.

- Pre-clean: Strip headers, footers, and page numbers.

- Sanity check: Print 10 random chunks. Do they make sense on their own?

Iterate from there based on actual retrieval results.

8. Common Beginner Mistakes:

- Splitting by character count instead of tokens. Token counts are what actually matter to the model.

- No overlap on dense technical content. Adjacent sentences reference each other constantly. Without overlap, those connections vanish.

- Never inspecting actual chunks. Always print samples before embedding. We will catch most issues in 30 seconds.

9. If This Came In An Interview:

- Why do we chunk documents? Three reasons. LLM context windows are limited. Smaller chunks improve retrieval precision; embeddings get vague on very long text.

- Fixed-size vs overlapping vs semantic chunking? Fixed-size equal pieces (fast but breaks sentences). Overlapping is fixed-size with a small overlap (best beginner default). Semantic splits at meaning shifts (highest quality, more expensive).

- What is a sensible default? Recursive splitting (or fixed-size + overlap) at ~500 tokens with ~50–100 token overlap.

- Why use overlap? So information that lands near a chunk boundary still appears fully in at least one chunk.

- Why count tokens instead of characters? Tokens are how LLMs and embedding models actually count text. Character counts can wildly mislead.

10. Summing It Up:

If we remember one thing from today, it is this: chunking is where data prep meets retrieval quality. Start simple with fixed-size + overlap or recursive splitting, inspect actual chunks, and only get fancier if results demand it.

Coming Up on Day 4

We named semantic chunking today, but only glanced at it. Tomorrow, we slow down on the recipe. Working code with sentence-transformers, where the similarity threshold actually comes from, and when the smarter (and pricier) strategy is worth it.

That's all for today. Let's meet up again tomorrow with Day 4.

Thanks for reading.

Cheers!