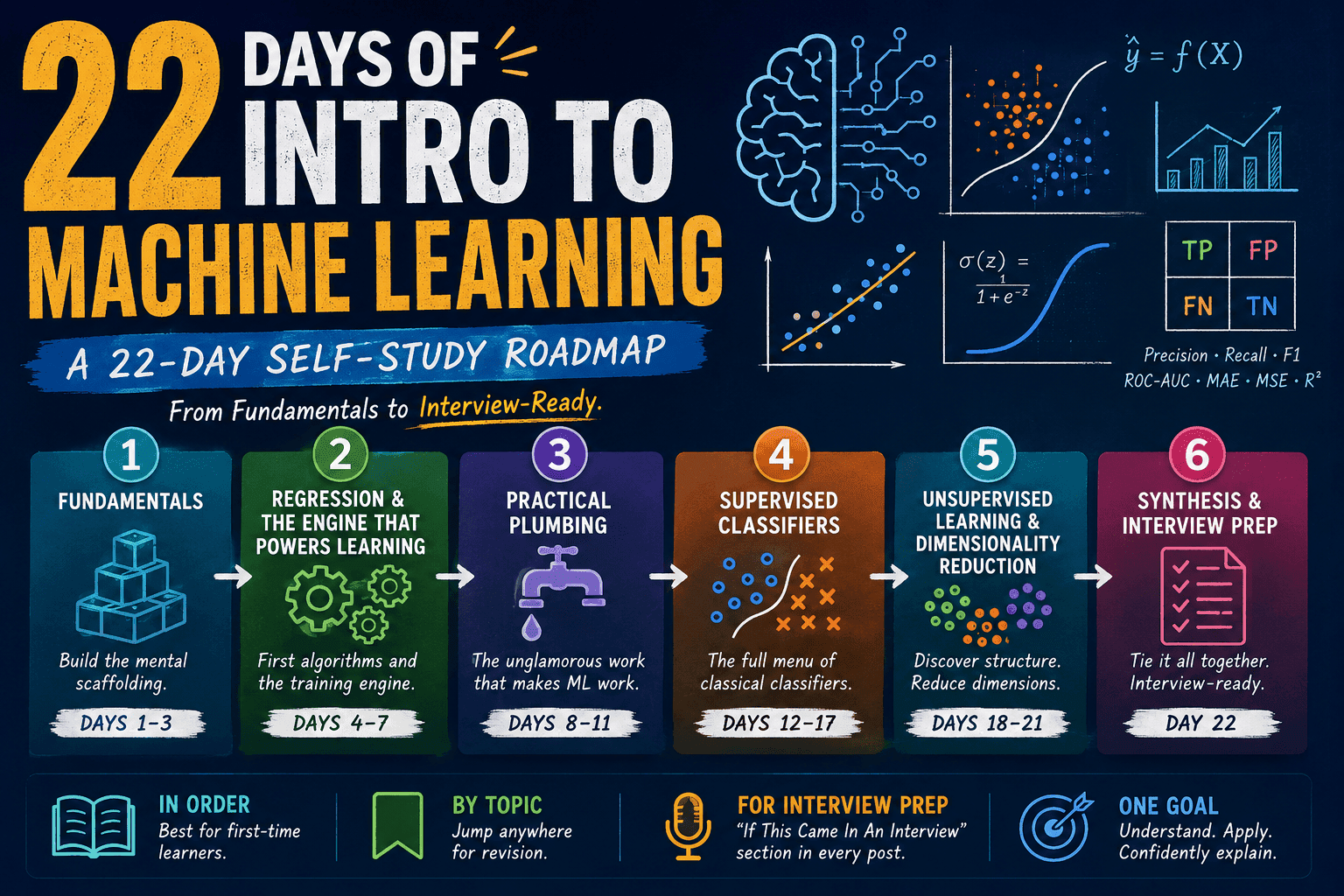

22 Days of Intro to Machine Learning Series

This is the intro post for my 22 days of Machine Learning self-study series.

Parathan Thiyagalingam

This is the index for my twenty-two-day Machine Learning self-study series. The plan was simple: to understand the building blocks of classical ML in a beginner-friendly way, and to be confident enough to discuss them in an interview or at work without feeling lost.

If you are reading these posts for the first time, the best path is to go in order. Each day picks up where the previous one left off, and the concepts build on each other. If you are using them as interview prep, you can also jump straight to any algorithm post. Every algorithm day has an "If This Came In An Interview" section with the most-asked questions and one-line answers.

Each post in the series is a summary of what I learned that day.

1. Fundamentals (Days 1–3):

The mental scaffolding for everything that follows. Start here.

- Day 1: What ML is, supervised vs unsupervised vs reinforcement, and the X / y / ŷ vocabulary.

- Day 2: Why we split data, why a single split is not enough, and what overfitting actually looks like.

- Day 3: The seesaw between being too simple and being too sensitive. The single most important conceptual question in ML.

2. Regression & The Engine That Powers Learning (Days 4–7):

The first real algorithms, and a peek under the hood at how training actually works.

- Day 4: The simplest model, and a stepping stone to almost every other algorithm.

- Day 5: The classification workhorse, plus the famous sigmoid curve.

- Day 6: Precision, recall, F1, ROC-AUC, MAE, MSE, R², and why accuracy lies.

- Day 7: The optimisation engine that powers nearly every modern ML algorithm.

3. Practical Plumbing (Days 8–11):

The unglamorous work that decides whether a real-world ML project succeeds or fails.

- Day 8: Encoding, scaling, missing values, and which models need what.

- Day 9: Controlling variance by penalising large coefficients.

- Day 10: How to evaluate honestly and pick the best settings.

- Day 11: Handling the rare-event problems that dominate the real world.

4. Supervised Classifiers (Days 12–17):

The full menu of classical classification algorithms, from the friendliest to the heaviest.

- Day 12: No training, just memory and distance.

- Day 13: Probability theory and the world's most-used spam filter.

- Day 14: The most interpretable classifier of all.

- Day 15: Many mediocre trees voting beats one great tree.

- Day 16: The algorithm that has dominated tabular ML for over a decade.

- Day 17: Pure geometry, the kernel trick, and the king of classification before deep learning arrived.

5. Unsupervised Learning & Dimensionality Reduction (Days 18–21):

Working with data that has no answer key, and squashing dimensions without losing meaning.

- Day 18: The most famous clustering algorithm.

- Day 19: A tree of all possible groupings, cut wherever we want.

- Day 20: Clusters of any shape, plus automatic outlier detection.

- Day 21: The most useful tool for handling high-dimensional data.

6. Synthesis & Interview Prep (Day 22):

The wrap-up that ties everything together.

- Day 22: The end-to-end ML workflow, data leakage gotchas, and a comprehensive interview "why" cheat sheet covering the whole series.

7. How to Read These Notes:

A few small suggestions, based on how I wrote them.

- In order, for first-time learners. Each post builds on the previous ones. Concepts are introduced once and reused with wikilinks throughout.

- By topic, for revision. Each post is self-contained enough to stand alone. Use the "Terms Used Today" glossary at the top of each post to orient yourself.

- For interview prep. Jump to the "If This Came In An Interview" section near the end of any algorithm or framework post. Day 22 collects every "why" question from the series in one place.

That's the whole series. Twenty-two days. From "what is ML?" to interview-ready.

Thanks for reading.

Cheers!